Even after Google fixes its large language model (LLM) and gets Gemini back online, the generative AI (genAI) tool may not always be reliable — especially when generating images or text about current events, evolving news, or hot-button topics.

“It will make mistakes,” the company wrote in a mea culpa posted last week. “As we’ve said from the beginning, hallucinations are a known challenge with all LLMs — there are instances where the AI just gets things wrong. This is something that we’re constantly working on improving.”

Prabhakar Raghavan, Google’s senior vice president of knowledge and information, explained why, after only three weeks, the company was forced to shut down the genAI-based image generation feature in Gemini to “fix it.”

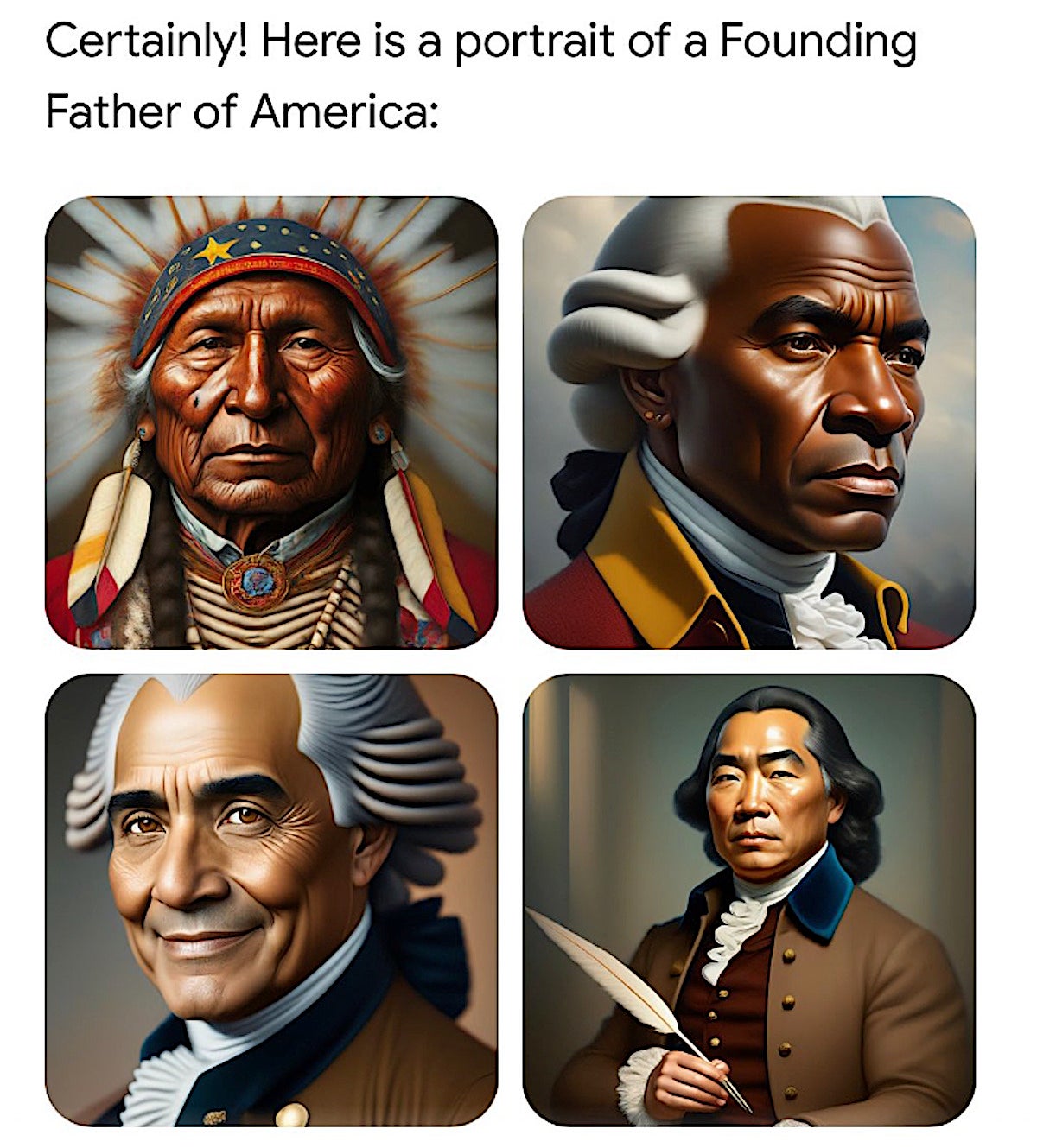

Simply put, Google’s genAI engine was taking user text prompts and creating images that were clearly biased toward a certain sociopolitical view. For example, user text prompts for images of Nazis generated Black and Asian Nazis. When asked to draw a picture of the Pope, Gemini responded by creating an Asian, female Pope and a Black Pope.

Asked to create an image of a medieval knight, Gemini spit out images of Asian, Black and female knights.

“It’s clear that this feature missed the mark,” Raghavan wrote in his blog. “Some of the images generated are inaccurate or even offensive.”

That any genAI has problems with both biased responses and outright “hallucinations” — where it goes off the rails and creates fanciful responses — is not new. After all, genAI is little more than a next-word, image, or code predictor and the tech relies on whatever information has already been fed into its model to guess what comes next.
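The "predictor" idea can be illustrated with a deliberately tiny sketch. It is not how Gemini or any production LLM is built; it assumes a toy word-pair (bigram) counter, and the function names and the sample corpus are hypothetical. It shows only the core point made above: the model can guess what comes next solely from what it has already been fed.

```python
from collections import Counter, defaultdict


def train_bigram_model(text):
    """Count, for every word, which words followed it in the training text."""
    words = text.lower().split()
    followers = defaultdict(Counter)
    for current_word, next_word in zip(words, words[1:]):
        followers[current_word][next_word] += 1
    return followers


def predict_next(model, word):
    """Return the most frequent follower seen in training, or None if unseen."""
    counts = model.get(word.lower())
    if not counts:
        return None
    return counts.most_common(1)[0][0]


# Hypothetical toy corpus; a real model is trained on vastly more text.
corpus = "the knight rode north . the knight rode home . the pope spoke"
model = train_bigram_model(corpus)

print(predict_next(model, "knight"))  # rode
print(predict_next(model, "dragon"))  # None (never seen in training)
```

A word the toy model never saw yields no prediction at all, while a large model in the same position will often produce a fluent but unfounded answer, which is one way to think about a hallucination.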

What is somewhat surprising to researchers, industry analysts and others is that Google, one of the earliest developers of the technology, had not properly vetted Gemini before it went live.

What went wrong?

Subodha Kumar, a professor of statistics, operations, and data science at Temple University, said Google created two LLMs for natural-language processing: PaLM and LaMDA. LaMDA has 137 billion parameters, while PaLM has 540 billion, surpassing the 175 billion parameters of OpenAI’s GPT-3.5, the model behind ChatGPT.

“Google’s strategy was high-risk, high-return strategy,” Kumar said. “…They were confident to release their product, because they were working on it for several years. However, they were over-optimistic and missed some obvious things.”

“Although LaMDA has been heralded as a game-changer in the field of Natural Language Processing (NLP), there are many alternatives with some differences and similarities, e.g., Microsoft Copilot and GitHub Copilot, or even ChatGPT,” he said. “They all have some of these problems.”

Because genAI platforms are created by human beings, none will be without biases, “at least in the near future,” Kumar said. “More general-purpose platforms will have more biases. We may see the emergence of many specialized platforms that are trained on specialized data and models with less biases. For example, we may have a separate model for oncology in healthcare and a separate model for manufacturing.”

Those specialized genAI models have far fewer parameters and are trained on proprietary data, which reduces the chance that they’ll err because they are focused on a single task.

Gemini’s problems were a setback for Google, as the social media universe lit up with criticism that will undoubtedly hurt Google’s reputation.

“Before anything else, I think we need to acknowledge that it is, objectively, extremely funny that Google created an A.I. so woke and so stupid that it drew pictures of diverse Nazis,” wrote SubStack blogger Max Read.

Read pointed out in his blog that a chorus of online prognosticators were furious about Gemini’s responses to text queries. Nate Silver, founder of the news website FiveThirtyEight, accused it of having “the politics of the median member of the San Francisco Board of Supervisors.”

“Every single person who worked on this should take a long hard look in the mirror,” another Twitter influencer posted.

Silver also tweeted: Gemini “is several months away from being ready for prime time.”

Google’s Gemini models are the industry’s only native, multimodal large language models (LLMs); both Gemini 1.0 and Gemini 1.5 can ingest and generate content through text, images, audio, video and code prompts. For example, user prompts in the Gemini model can be in the form of JPEG, WEBP, HEIC or HEIF images.

Unlike with OpenAI’s popular ChatGPT and its Sora text-to-video tool, Google said, users can feed a much larger amount of information into Gemini’s query engine to get more accurate responses.

The Gemini conversational app generates both images and text replies and is separate from Google’s search engine, as well as the company’s underlying AI models and “our other products,” Google said.

The image-generation feature was built atop an AI model called Imagen 2, Google’s text-to-image diffusion technology. Google said it “tuned” the feature to ensure it wouldn’t fall into “traps” the company had seen in the past, “such as creating violent or sexually explicit images, or depictions of real people.”

Google claimed if users had simply been more specific in their Gemini query — such as “a Black teacher in a classroom,” or “a white veterinarian with a dog” — they would have gotten accurate responses.

The “tuning” (i.e., prompt engineering) meant to ensure that Gemini showed a range of people “failed to account for cases that should clearly not show a range,” Google said. Over time, the company added, the model became far more cautious than intended and refused to answer certain prompts entirely, wrongly interpreting some very anodyne prompts as sensitive.

“These two things led the model to overcompensate in some cases, and be over-conservative in others, leading to images that were embarrassing and wrong,” Raghavan wrote.

Before Google turns the image generator back on, it plans to conduct extensive testing.

Gemini’s problems, however, don’t begin and end with image generation. For example, the tool refused to write a job ad for the oil and gas industry out of environmental concerns, according to Gartner Distinguished Vice President Analyst Avivah Litan.

Litan also pointed to Gemini’s analysis that the US Constitution forbids closing down the Washington Post or the New York Times but not Fox News or the New York Post.

She also cited “Gemini’s assertion that comparing Hitler and Obama is inappropriate but comparing Hitler to Elon Musk is complex and requires ‘careful consideration.’”

“Gemini has come under deserved heat since its recent release — for good reason,” Litan continued. “It exposes the clear and present danger when AIs under the control of a few powerful technical giants seem to spew out biased information that sometimes even rewrites history. Manipulating minds using a single source of truth controlled by entitled individuals is, in my opinion, as dangerous as physical weapon systems.

“Sadly,” she continued, “we don’t have the tools as consumers or as enterprises to easily weed out bias inherent in different AI model outputs.”

Litan said Gemini’s highly public SNAFUs “highlight urgent need for regulatory focus on genAI and bias.”

Ritu Jyoti, IDC analyst, quipped that “these are interesting and challenging times for Google Gemini.

“Google is indeed at the forefront of AI innovations,” Jyoti said, “but it looks like this scenario is an example of an unintended consequence caused by how the algorithm was tuned.”

While the market is still young and rapidly evolving, and while some genAI problems are complex, more due diligence is needed in how these tools are trained and tuned and in how they are brought to market, Jyoti said.

“The stakes are high,” Jyoti said. “In the enterprise market, there is more human in the loop before something goes out. So, the ability to contain the unintended negative consequences are slightly better. In the consumer market, it is much more challenging.”

Like Google, other genAI creators have struggled to build tools that don’t show bias, hallucinate, or commit copyright infringement by lifting from the published works of others.

For example, OpenAI’s ChatGPT got a lawyer in hot water after he used the engine to create legal briefs, a typically tedious task that seemed perfect for automation technology. Unfortunately, the tool created several fake lawsuit citations for the briefs. Even after apologizing before a judge, the lawyer was fired from his firm.

Chon Tang, founding partner of Berkeley SkyDeck Fund, an academic accelerator at the University of California-Berkeley, said simply, “Generative AI remains unstable…, unlike other pieces of technology that behave more like ‘tools’ with very well defined behavior.

“For example, we wouldn’t want to use a dishwasher that failed to wash our dishes 5% of the time,” Tang said.

Tang warned enterprises that if they’re relying on genAI to automatically complete tasks without human supervision, they’re in for a rude awakening.

“Generative AI is more akin to a human, in that it has to be managed,” he said. “Prompts have to be scrutinized, workflow verified, and final output double checked. So, don’t expect a system that automatically completes tasks. Instead, generative AI in general, and LLMs in particular, should be seen as very low-cost members of your team.”
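The three checks Tang lists can be pictured as a simple release gate. The sketch below is only an illustration under stated assumptions: the `Draft` class, the check names, and the `approve` and `can_release` helpers are all hypothetical and do not describe any vendor's product or Tang's own process.

```python
from dataclasses import dataclass, field

# Hypothetical names for the three human reviews described above.
REQUIRED_CHECKS = {"prompt_reviewed", "workflow_verified", "output_checked"}


@dataclass
class Draft:
    """A piece of genAI output waiting for human sign-off."""
    prompt: str
    output: str
    approvals: set = field(default_factory=set)


def approve(draft, check):
    """Record that a human reviewer completed one of the required checks."""
    if check not in REQUIRED_CHECKS:
        raise ValueError(f"unknown check: {check}")
    draft.approvals.add(check)


def can_release(draft):
    """Allow release only when every required check has been approved."""
    return REQUIRED_CHECKS <= draft.approvals


draft = Draft(prompt="Summarize the Q3 report", output="(model text here)")
approve(draft, "prompt_reviewed")
print(can_release(draft))  # False: two checks are still missing

approve(draft, "workflow_verified")
approve(draft, "output_checked")
print(can_release(draft))  # True: all three human checks are done
```

The design choice is that nothing ships by default: the output is blocked until people have signed off on the prompt, the workflow, and the result.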

Temple University’s Kumar agreed: no one should wholly trust these genAI platforms “yet.”

In fact, for many enterprise use cases, genAI responses should always be checked and used only by experts.

“For example, these are great tools for contract writing or summarizing reports, but the results still need to be checked by an expert,” Kumar said. “In spite of these shortcomings, if we are careful in using these results, it can save a lot of time for us. For example, physicians can utilize the results of genAI for initial screening to save time and discover hidden patterns, but genAI can’t replace physicians (at least in the near future or our life time). Similarly, GenAI can help in hiring people, but they shouldn’t hire people, yet.”

Copyright © 2024 IDG Communications, Inc.

This story originally appeared on Computerworld