In the days since the U.S. and Israel attacked Iran, bad actors have overwhelmed social media feeds with images and videos of missile attacks, destruction, combat and death.

Much of that viral imagery doesn’t show real or current events. Often, it’s generated with artificial intelligence, or it’s old footage misrepresented as new.

AI is creating more realistic content that is harder to debunk.

Here’s how to identify inaccurate content online.

Look out for recycled footage

Tal Hagin, an open-source intelligence analyst based in Israel, compiles misinformation and shares his findings on X daily. He said he’s seen people recycling footage of Iranian missile strikes on Israel from April 2024, October 2024 and June 2025 and claiming it shows recent events.

One X user posted a photo of soldiers with their guns drawn. “JUST IN,” the caption read, “Lebanese Army and Hezbollah have captured multiple towns including Zarit in northern Israel and liberated it from Illegal IDF occupation forces during Iranian missiles strikes across Israel.”

But a reverse-image search revealed this image was posted in 2023, unrelated to any war. The photo, dated May 21, 2023, and uploaded by Reuters, showed Hezbollah members participating in a military exercise.

X users linked another video of a large explosion to the war in Iran, but it was from a 2015 chemical warehouse incident in Tianjin, China.

(Screenshot, left, from an X post on March 1; screenshot, right, from a BBC video uploaded Aug. 14, 2015)

One way to check for old footage is to take a screenshot of the video, run it through reverse-image search tools such as Google and TinEye, and check whether it was posted before February 2026.
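As a rough sketch of that date check, the comparison can be written in a few lines of Python. The function name `predates_event`, the sample dates and the choice of Feb. 1, 2026, as the cutoff are illustrative assumptions, not part of any search tool; the posting dates would have to be collected by hand from the reverse-image search results.

```python
from datetime import date

# Assumed cutoff: "before February 2026" is taken here as Feb. 1, 2026.
CUTOFF = date(2026, 2, 1)

def predates_event(posting_dates, cutoff=CUTOFF):
    """Return the earliest posting date if it falls before the cutoff, else None."""
    if not posting_dates:
        return None
    earliest = min(posting_dates)
    return earliest if earliest < cutoff else None

# Hypothetical dates copied by hand from reverse-image search results.
found = [date(2026, 3, 1), date(2015, 8, 14)]
print(predates_event(found))  # a date here means the imagery existed before the cutoff
```

A result of `None` only means no earlier copy was found, not that the footage is authentic.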

AI-generated imagery contains inconsistencies, but be wary of false AI accusations

AI creations are getting more sophisticated and have moved past the days when human hands with too many fingers were a giveaway.

“These fabrications are becoming more convincing and harder for seasoned experts to identify,” Hagin said.

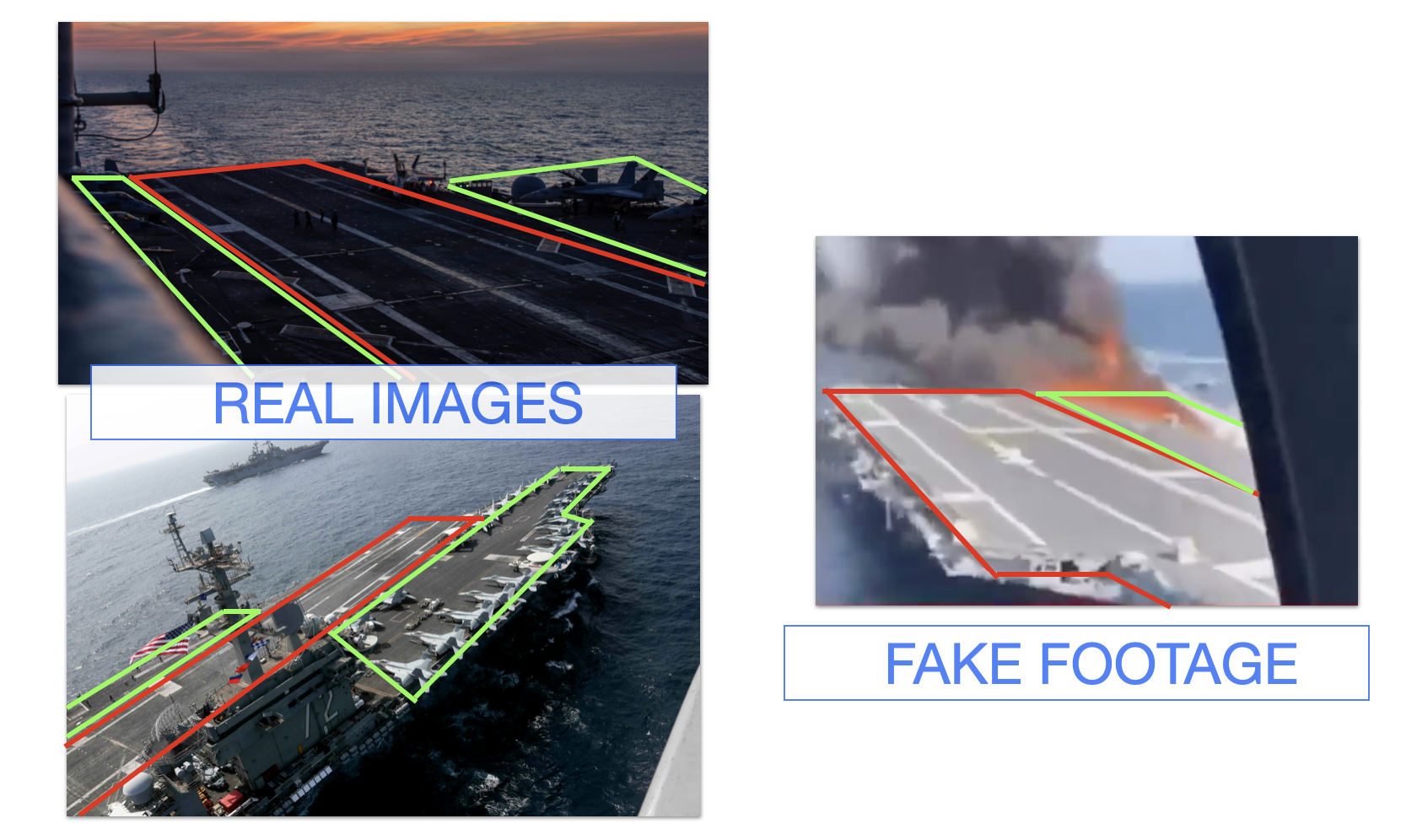

But there are still some clues. PolitiFact fact-checked posts claiming Iran sank the U.S. aircraft carrier USS Abraham Lincoln. There were no credible reports proving that happened, and we found that the lines on the vessel in the video did not match those in real pictures of the carrier.

(Images from The Associated Press, left, show the USS Abraham Lincoln on Jan. 22, 2026, and May 17, 2019. A screenshot from a supposed video of the carrier that appeared March 1 on X, right, depicted a different structure design, as our analysis of the runway images shows. Red lines show the runways and green lines show the seacrafts’ auxiliary spaces.)

Looking closely at an image or video can also reveal some telltale signs such as inconsistent lighting, text distortions or unnatural textures, Hagin said.

If there are street or shop signs visible in the video, check Google Maps to see if such a location really exists. AI images are prone to showing illegible text or place names that aren’t real.

If there are no obvious signs of AI generation, look for news reports or other documentation that confirm the event really happened. Consider whether only one video or angle exists showing a supposedly high-profile event with many witnesses. For example, if the USS Abraham Lincoln had truly been sunk, people close to the attack would have seen it and possibly documented it.

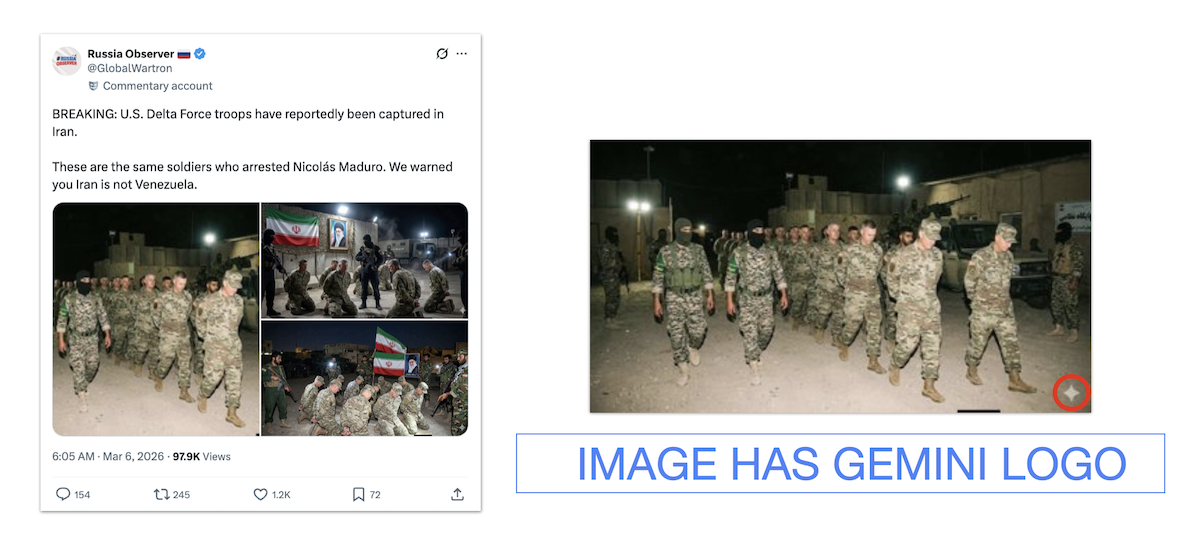

Some AI tools that create images, video or audio also have counterparts that detect whether a piece of content was made using that tool. Google’s Gemini can detect if an image contains SynthID, a watermark embedded in images generated by the tool. It’s detectable by Google’s technology but not visible to the human eye. You can upload a dubious image to Gemini and ask if it contains SynthID.

X users circulated images of U.S. soldiers supposedly captured by Iran. But some of these images still contained the Gemini logo on the bottom right, a sign they were AI-generated.

(Screenshot and image from X)

Some video generators, such as Google’s Veo, can only create clips up to eight seconds long. If a clip is around that length, or if a video is composed of clips that are only eight seconds or shorter, AI might have been involved.
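The clip-length clue can be expressed as a simple check. This is a minimal Python sketch; the function name `all_clips_short`, the sample durations and the half-second tolerance are assumptions made for illustration, and the shot lengths would need to be timed by hand or read off a video editor.

```python
MAX_CLIP_SECONDS = 8.0  # the limit described above for some generators, such as Veo

def all_clips_short(shot_lengths, limit=MAX_CLIP_SECONDS, tolerance=0.5):
    """Return True if every shot is at or under the limit, plus a small tolerance."""
    if not shot_lengths:
        return False  # nothing measured, so nothing to flag
    return all(length <= limit + tolerance for length in shot_lengths)

# Hypothetical shot lengths, in seconds, timed by hand.
print(all_clips_short([7.9, 8.0, 6.2]))
print(all_clips_short([7.9, 23.4]))
```

A match is only a hint that AI might have been involved, since plenty of authentic clips are also short.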

Audio can also reveal whether a video was AI-generated. Background sounds may be suspiciously quiet for the setting. Voices may be audible but unintelligible.

Conversely, some posts claim that an image or video was generated or altered with AI when it wasn’t. An X account claimed The New York Times published an AI-generated image showing massive crowds gathered in Tehran Square after Iran named its new supreme leader. But the image was authentic, taken by a photojournalist.

Pick your sources wisely

Many of the accounts that share misinformation on X have blue check marks and the words “Iran” or “news” in their usernames. That doesn’t prove they are legitimate or that they provide accurate information.

Check if an account parading as a source of “news” or “updates” is affiliated with an official body or credible organization. Hagin said he’s observed accounts that change identities based on the latest crisis.

People also resort to asking chatbots whether something is true, but chatbots are limited and have no real ability to discern accuracy.

“Their accuracy depends on the quality and recency of the data they’re trained on, as well as how a question is phrased,” Hagin said. “Always check the sources provided by a chatbot; never rely solely on its summary or conclusions.”

Fact-checkers, including PolitiFact, Snopes, BBC Verify, Full Fact, Lead Stories and AFP, continuously debunk viral false information. When in doubt, check if journalists have reported the facts on a piece of war imagery.

This story originally appeared on PolitiFact